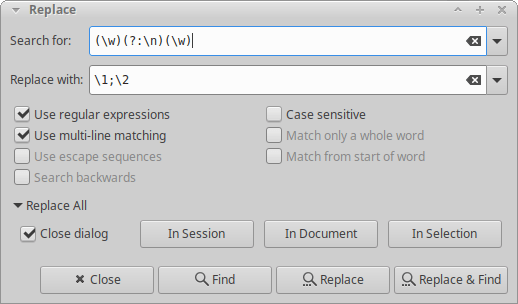

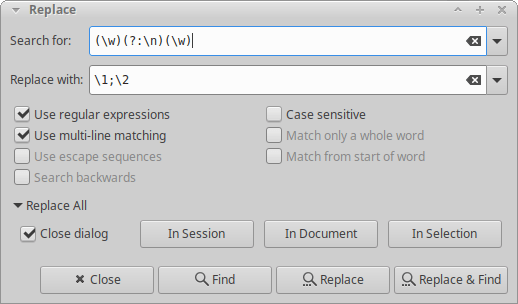

Geany's manual describes named matching groups, but those don’t seem to work in the actual find & replace dialog. Instead, you can refer to groups by number. Less convenient, but generally workable.

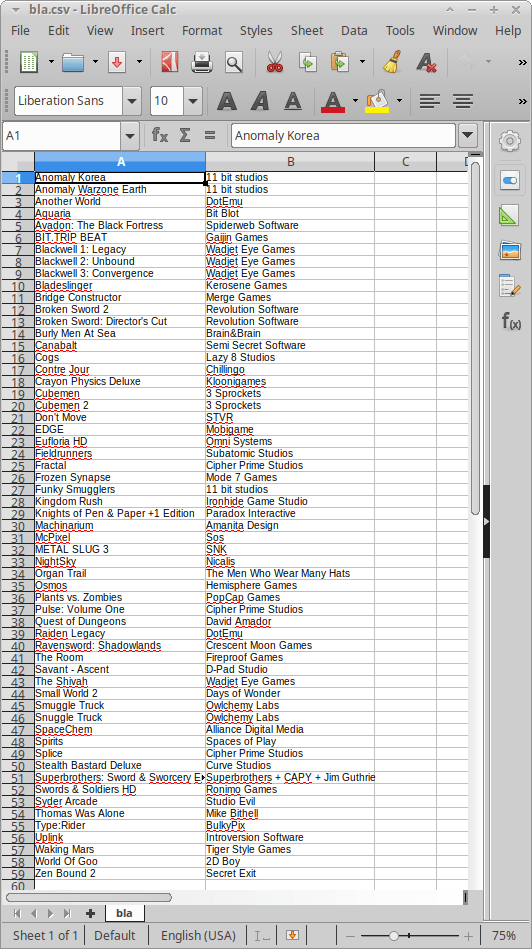

I’ll give you a concrete example. I wanted a local copy of my list of games from Humble Bundle so I could examine it more easily. I didn’t see an obvious way to export it, so I just copied the text from the website (after deactivating the user-select: none CSS property). Long story short, I ended up with a list like this:

Anomaly Korea

11 bit studios

Anomaly Warzone Earth

11 bit studios

Another World

DotEmu

Aquaria

Bit Blot

Avadon: The Black Fortress

Spiderweb Software

BIT.TRIP BEAT

Gaijin Games

Blackwell 1: Legacy

Wadjet Eye Games

Blackwell 2: Unbound

Wadjet Eye Games

Blackwell 3: Convergence

Wadjet Eye Games

Bladeslinger

Kerosene Games

Bridge Constructor

Merge Games

Using the regex pictured above, that turns into this:

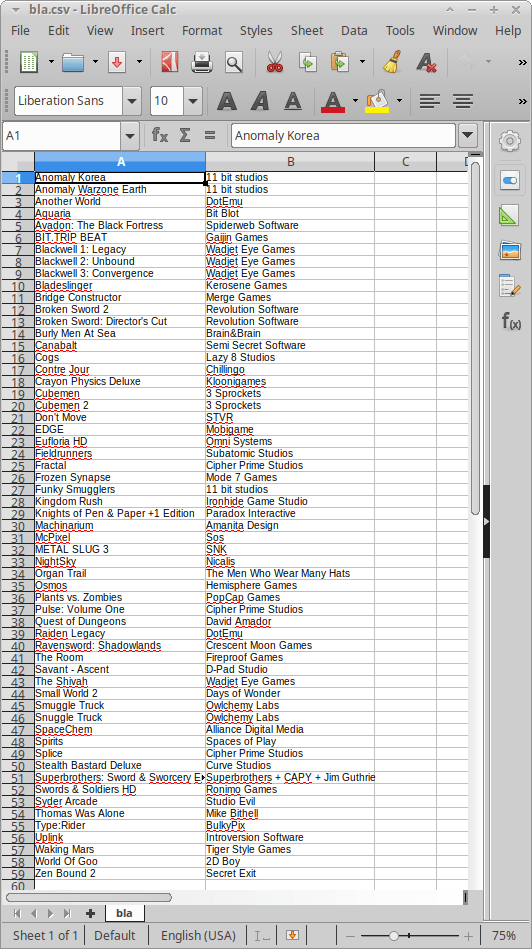

Anomaly Korea;11 bit studios

Anomaly Warzone Earth;11 bit studios

Another World;DotEmu

Aquaria;Bit Blot

Avadon: The Black Fortress;Spiderweb Software

BIT.TRIP BEAT;Gaijin Games

Blackwell 1: Legacy;Wadjet Eye Games

Blackwell 2: Unbound;Wadjet Eye Games

Blackwell 3: Convergence;Wadjet Eye Games

Bladeslinger;Kerosene Games

Bridge Constructor;Merge Games
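
If you'd rather script this than click through a dialog, here is a rough Python sketch of the same idea: capture two consecutive non-empty lines and join them with a semicolon, referring to the groups by number. The pattern is my own guess at an equivalent, not necessarily the one in the screenshot, and the names SAMPLE, join_pairs and strip_blank_lines are made up for this illustration.

```python
import re

# Hypothetical sample in the same shape as the copied list:
# a title line, then a developer line, with blank lines in between.
SAMPLE = "Anomaly Korea\n\n11 bit studios\n\nAquaria\n\nBit Blot\n"

# Assumed pattern (not necessarily the one from the screenshot):
# group 1 is a non-empty line, group 2 is the next non-empty line.
PAIR = re.compile(r"^(.+)\n+(.+)$", re.MULTILINE)


def join_pairs(text: str) -> str:
    """Join each title/developer pair using numbered group references."""
    return PAIR.sub(r"\1;\2", text)


def strip_blank_lines(text: str) -> str:
    """Collapse runs of newlines into one (the optional cleanup step)."""
    return re.sub(r"\n{2,}", "\n", text)


if __name__ == "__main__":
    print(strip_blank_lines(join_pairs(SAMPLE)), end="")
```

Note that this assumes the lines strictly alternate between title and developer. A stray extra line would shift every pair after it.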

After optionally stripping out the double newlines (replace \n\n with \n), you can save the file as a .csv and open it in Calc. And there you have it. My complete list of Android games: